Expert Systems: Definition, Functioning, and Development Approach - [Part: 2]


Artificial Intelligence

Dr. DEGHA Houssem Eddine

March 17, 2025


We discuss how internal annotators were used to confirming the quality of the summaries. Though the monitoring of quality does not stop there, we continually monitor user interaction with the extractive summaries as a proxy for quality and satisfaction.Open & reproducible research - What can we do in practice?

Open & reproducible research - What can we do in practice?Felix Z. Hoffmann

╠²

- There is a reproducibility crisis in computational research even when code is made available. Out of 206 computational studies in Science magazine since a policy change mandating sharing, only 26 directly provided their code and data. Of those judged potentially reproducible when code was available, more than half still required significant effort to reproduce.

- Making research fully reproducible requires addressing issues like difficult computational environments, long run times, dependency on previous results, and clarity on what is required to reproduce a single finding. Following principles like ensuring code is re-runnable, repeatable, reproducible, reusable, and replicable can help achieve reproducibility. Publishing code on platforms like Zenodo and OSF can also aid reproducibility.Journal Articles Are Not the Only Fruit: Registered Reports

Journal Articles Are Not the Only Fruit: Registered ReportsUniversity of Liverpool Library

╠²

The document summarizes registered reports, an alternative publication format that aims to address reproducibility issues. It discusses:

1) The standard publication process and reproducibility crisis in science due to biases like publication bias, low statistical power, p-hacking, and HARKing.

2) What registered reports are - a two-stage peer review process where the proposed methods and analyses are peer-reviewed before data collection. This removes biases driven by study outcomes.

3) Why registered reports are gaining popularity - they can increase reproducibility, computational reproducibility, and study quality while reducing biases compared to standard publications.

4) An example of an author's experience submitting a registered report to be peer-reviewed in stageRe-Mining Association Mining Results Through Visualization, Data Envelopment ...

Re-Mining Association Mining Results Through Visualization, Data Envelopment ...ertekg

╠²

─░ndirmek i├¦in Ba─¤lant─▒ > https://ertekprojects.com/gurdal-ertek-publications/blog/re-mining-association-mining-results-through-visualization-data-envelopment-analysis-and-decision-trees/

Re-mining is a general framework which suggests the execution of additional data mining steps based on the results of an original data mining process. This study investigates the multi-faceted re-mining of association mining results, develops and presents a practical methodology, and shows the applicability of the developed methodology through real world data. The methodology suggests re-mining using data visualization, data envelopment analysis, and decision trees. Six hypotheses, regarding how re-mining can be carried out on association mining results, are answered in the case study through empirical analysis.Introduction to research

Introduction to researchHKRabby2

╠²

This document provides an introduction to research, including definitions of research, the differences between thesis and project work, steps in the research process such as identifying a topic and finding background information, research as a process involving conceptual approaches and data collection techniques, tracks in research, and qualities of a successful researcher.My experiment

My experimentBoshra Albayaty

╠²

This document outlines the PhD journey of Boshra F. Zopon Al_Bayaty in India. It discusses her coursework, research on knowledge discovery from web search, conferences attended both nationally and internationally, and her research contributions. Her research focused on using a "Master-Slave" model combining multiple supervised algorithms to improve word sense disambiguation and accuracy of over 70%. The document provides details on her research methodology, experiments conducted, results and opportunities for future work improving the model for other languages and applications. It demonstrates the various stages of her PhD journey and research progress.Engineering Data Science Objectives for Social Network Analysis

Engineering Data Science Objectives for Social Network AnalysisDavid Gleich

╠²

A talk I gave at Lawerence Livermore National Labs on how to engineer an objective for a data science application. Natural Language Processing for Data Extraction and Synthesizability Predicti...

Natural Language Processing for Data Extraction and Synthesizability Predicti...Anubhav Jain

╠²

This document discusses using natural language processing and machine learning techniques to extract and analyze synthesis recipes from materials science literature. It presents work using sequence-to-sequence models to extract entities and relationships for the synthesis of gold nanorods and bismuth ferrite from research papers. Decision trees trained on the extracted data are able to reproduce conclusions about the effects of synthesis parameters from literature. However, applying these techniques to predictive synthesis still faces challenges regarding reproducibility, missing information, and lack of negative examples in literature datasets.Recently uploaded (20)


Expert Systems: Definition, Functioning, and Development Approach - [Part: 2]

- 1. Artificial Intelligence Expert Systems: Definition, Functioning, and Development Approach - [Part: 2] Dr. DEGHA Houssem Eddine March 17, 2025 Dr. DEGHA Houssem Eddine Artificial Intelligence March 17, 2025 1/17

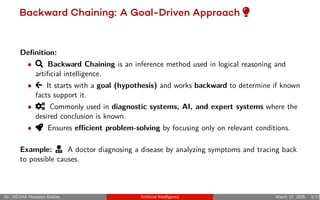

- 3. Backward Chaining: A Goal-Driven Approach
  - Definition:
    - Backward chaining is an inference method used in logical reasoning and artificial intelligence.
    - It starts with a goal (hypothesis) and works backward to determine whether known facts support it.
    - Commonly used in diagnostic systems, AI, and expert systems where the desired conclusion is known.
    - Ensures efficient problem-solving by focusing only on relevant conditions.
  - Example: A doctor diagnosing a disease by analyzing symptoms and tracing back to possible causes.

- 4. Backward Chaining: Thinking in Reverse
  - How it works:
    - Starts with a goal: verifies its validity by analyzing prior conditions.
    - Used in AI: applied in decision support systems and logical reasoning.
    - Efficient processing: focuses only on relevant information to reach conclusions.
  - Example scenario: AI diagnosing a network issue by tracing root causes step by step.
  - Key benefit: increases efficiency by avoiding unnecessary data exploration.

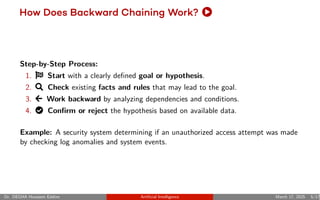

- 5. How Does Backward Chaining Work?
  - Step-by-step process:
    1. Start with a clearly defined goal or hypothesis.
    2. Check existing facts and rules that may lead to the goal.
    3. Work backward by analyzing dependencies and conditions.
    4. Confirm or reject the hypothesis based on available data.
  - Example: A security system determining whether an unauthorized access attempt was made by checking log anomalies and system events.

- 6. Data and Relationships
  - Papers and topics:
    - Paper A: "Deep Learning in NLP"
    - Paper B: "Neural Network Basics"
    - Paper C: "Advanced NLP Models"
    - Paper D: "Attention Mechanisms"
    - Paper E: "Transformer Networks"
    - Paper F: "Renewable Energy Systems"
    - Paper G: "Solar Power Innovations"
    - Paper H: "Wind Energy Optimization"
  - Known relationships:
    - cites(PaperA, PaperB)
    - cites(PaperA, PaperC)
    - cites(PaperB, PaperD)
    - coCited(PaperC, PaperD)
    - coCited(PaperD, PaperE)
    - cites(PaperE, PaperF)
    - coCited(PaperF, PaperG)
    - cites(PaperG, PaperH)
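The four steps above can be sketched as a small recursive procedure. This is a minimal illustration, not the deck's own implementation: the rule base and the fact names (`log_anomaly`, `failed_logins_spike`, and so on) are hypothetical, chosen only to echo the security example on the slide.

```python
from typing import Dict, List, Optional, Set

# Hypothetical rule base: each goal maps to alternative lists of premises.
# A goal holds if ALL premises of at least ONE alternative hold.
RULES: Dict[str, List[List[str]]] = {
    "unauthorized_access": [["log_anomaly", "suspicious_event"]],
    "log_anomaly": [["failed_logins_spike"], ["login_from_unknown_ip"]],
    "suspicious_event": [["privilege_escalation"]],
}


def prove(goal: str, facts: Set[str], visiting: Optional[Set[str]] = None) -> bool:
    """Return True if `goal` is a known fact or can be derived backward from RULES."""
    visiting = set() if visiting is None else visiting
    if goal in facts:      # step 2: the goal is directly supported by a fact
        return True
    if goal in visiting:   # guard against circular rule dependencies
        return False
    visiting = visiting | {goal}
    # Step 3: work backward through every rule that concludes `goal`.
    for premises in RULES.get(goal, []):
        if all(prove(p, facts, visiting) for p in premises):
            return True
    return False           # step 4: nothing supports the hypothesis


# Step 1: the goal is "unauthorized_access"; the facts stand in for log data.
confirmed = prove("unauthorized_access", {"login_from_unknown_ip", "privilege_escalation"})
rejected = prove("unauthorized_access", {"login_from_unknown_ip"})
print(confirmed, rejected)
```

Only rules that conclude the current goal are ever inspected, which is the efficiency argument the slides make for backward chaining.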

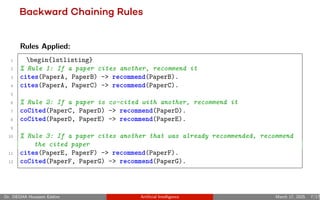

- 7. Backward Chaining Rules
  Rules applied:

  ```
  % Rule 1: If a paper cites another, recommend it
  cites(PaperA, PaperB) -> recommend(PaperB).
  cites(PaperA, PaperC) -> recommend(PaperC).

  % Rule 2: If a paper is co-cited with another, recommend it
  coCited(PaperC, PaperD) -> recommend(PaperD).
  coCited(PaperD, PaperE) -> recommend(PaperE).

  % Rule 3: If a paper cites another that was already recommended, recommend the cited paper
  cites(PaperE, PaperF) -> recommend(PaperF).
  coCited(PaperF, PaperG) -> recommend(PaperG).
  ```

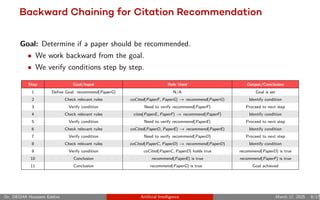

- 8. Backward Chaining for Citation Recommendation
  Goal: Determine if a paper should be recommended.
  - We work backward from the goal.
  - We verify conditions step by step.

  | Step | Goal/Input | Rule Used | Output/Conclusion |
  |---|---|---|---|
  | 1 | Define goal: recommend(PaperG) | N/A | Goal is set |
  | 2 | Check relevant rules | coCited(PaperF, PaperG) → recommend(PaperG) | Identify condition |
  | 3 | Verify condition | Need to verify recommend(PaperF) | Proceed to next step |
  | 4 | Check relevant rules | cites(PaperE, PaperF) → recommend(PaperF) | Identify condition |
  | 5 | Verify condition | Need to verify recommend(PaperE) | Proceed to next step |
  | 6 | Check relevant rules | coCited(PaperD, PaperE) → recommend(PaperE) | Identify condition |
  | 7 | Verify condition | Need to verify recommend(PaperD) | Proceed to next step |
  | 8 | Check relevant rules | coCited(PaperC, PaperD) → recommend(PaperD) | Identify condition |
  | 9 | Verify condition | coCited(PaperC, PaperD) holds true | recommend(PaperD) is true |
  | 10 | Conclusion | recommend(PaperE) is true | recommend(PaperF) is true |
  | 11 | Conclusion | recommend(PaperG) is true | Goal achieved |
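The trace in the table can be reproduced with a short sketch. It assumes one reading of the slides: each `recommend` goal needs its relationship to be a known fact and, where the table says "Need to verify", an earlier recommendation as well. The `RULES` encoding and the `backward_chain` helper are illustrative names, not part of the original deck.

```python
from typing import Dict, List, Optional, Set, Tuple

# Known relationships from the "Data and Relationships" slide.
FACTS: Set[str] = {
    "cites(PaperA, PaperB)", "cites(PaperA, PaperC)", "cites(PaperB, PaperD)",
    "coCited(PaperC, PaperD)", "coCited(PaperD, PaperE)", "cites(PaperE, PaperF)",
    "coCited(PaperF, PaperG)", "cites(PaperG, PaperH)",
}

# Assumed encoding: goal -> (relationship that must hold, prior goal or None).
RULES: Dict[str, Tuple[str, Optional[str]]] = {
    "recommend(PaperG)": ("coCited(PaperF, PaperG)", "recommend(PaperF)"),
    "recommend(PaperF)": ("cites(PaperE, PaperF)", "recommend(PaperE)"),
    "recommend(PaperE)": ("coCited(PaperD, PaperE)", "recommend(PaperD)"),
    "recommend(PaperD)": ("coCited(PaperC, PaperD)", None),
}


def backward_chain(goal: str, trace: List[str]) -> bool:
    """Try to establish `goal`, appending each reasoning step to `trace`."""
    if goal not in RULES:
        trace.append(f"{goal}: no rule concludes this goal")
        return False
    relation, prior = RULES[goal]
    trace.append(f"{goal}: needs {relation}" + (f" and {prior}" if prior else ""))
    if relation not in FACTS:
        trace.append(f"{relation}: not a known fact")
        return False
    # Recurse on the earlier recommendation before accepting this one.
    if prior is not None and not backward_chain(prior, trace):
        return False
    trace.append(f"{goal} is true")
    return True


trace: List[str] = []
achieved = backward_chain("recommend(PaperG)", trace)
print("\n".join(trace))
```

The subgoals are visited in the order G, F, E, D and confirmed in the reverse order, matching steps 2 to 11 of the table.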

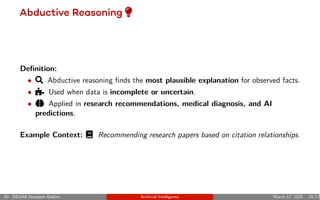

- 10. Abductive Reasoning
  - Definition:
    - Abductive reasoning finds the most plausible explanation for observed facts.
    - Used when data is incomplete or uncertain.
    - Applied in research recommendations, medical diagnosis, and AI predictions.
  - Example context: Recommending research papers based on citation relationships.

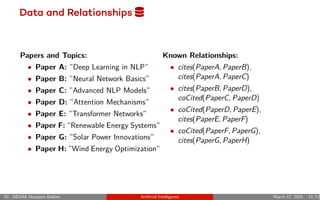

- 11. Data and Relationships
  - Papers and topics:
    - Paper A: "Deep Learning in NLP"
    - Paper B: "Neural Network Basics"
    - Paper C: "Advanced NLP Models"
    - Paper D: "Attention Mechanisms"
    - Paper E: "Transformer Networks"
    - Paper F: "Renewable Energy Systems"
    - Paper G: "Solar Power Innovations"
    - Paper H: "Wind Energy Optimization"
  - Known relationships:
    - cites(PaperA, PaperB), cites(PaperA, PaperC)
    - cites(PaperB, PaperD), coCited(PaperC, PaperD)
    - coCited(PaperD, PaperE), cites(PaperE, PaperF)
    - coCited(PaperF, PaperG), cites(PaperG, PaperH)

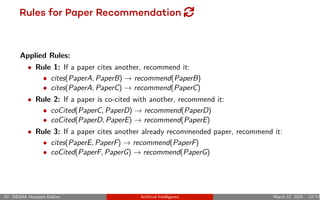

- 12. Rules for Paper Recommendation
  - Applied rules:
    - Rule 1: If a paper cites another, recommend it:
      - cites(PaperA, PaperB) → recommend(PaperB)
      - cites(PaperA, PaperC) → recommend(PaperC)
    - Rule 2: If a paper is co-cited with another, recommend it:
      - coCited(PaperC, PaperD) → recommend(PaperD)
      - coCited(PaperD, PaperE) → recommend(PaperE)
    - Rule 3: If a paper cites another already recommended paper, recommend it:
      - cites(PaperE, PaperF) → recommend(PaperF)
      - coCited(PaperF, PaperG) → recommend(PaperG)

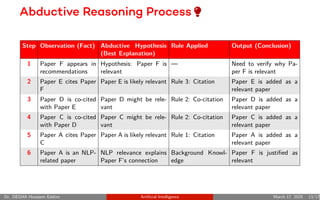

- 13. Abductive Reasoning Process

  | Step | Observation (Fact) | Abductive Hypothesis (Best Explanation) | Rule Applied | Output (Conclusion) |
  |---|---|---|---|---|
  | 1 | Paper F appears in recommendations | Hypothesis: Paper F is relevant | N/A | Need to verify why Paper F is relevant |
  | 2 | Paper E cites Paper F | Paper E is likely relevant | Rule 3: Citation | Paper E is added as a relevant paper |
  | 3 | Paper D is co-cited with Paper E | Paper D might be relevant | Rule 2: Co-citation | Paper D is added as a relevant paper |
  | 4 | Paper C is co-cited with Paper D | Paper C might be relevant | Rule 2: Co-citation | Paper C is added as a relevant paper |
  | 5 | Paper A cites Paper C | Paper A is likely relevant | Rule 1: Citation | Paper A is added as a relevant paper |
  | 6 | Paper A is an NLP-related paper | NLP relevance explains Paper F's connection | Background knowledge | Paper F is justified as relevant |
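One way to mechanize the table above is to start from the observation and walk the known relationships backward, collecting each linked paper as a candidate explanation until background knowledge is reached. The sketch below is an assumption-laden illustration: `abduce` and `BACKGROUND_NLP` are hypothetical names, relationships are treated as directed exactly as written, and only Paper A is marked as NLP background knowledge, mirroring step 6.

```python
from collections import deque
from typing import Deque, List, Optional, Set, Tuple

# (relation, source, destination), in the order given on the slide.
LINKS: List[Tuple[str, str, str]] = [
    ("cites", "PaperA", "PaperB"), ("cites", "PaperA", "PaperC"),
    ("cites", "PaperB", "PaperD"), ("coCited", "PaperC", "PaperD"),
    ("coCited", "PaperD", "PaperE"), ("cites", "PaperE", "PaperF"),
    ("coCited", "PaperF", "PaperG"), ("cites", "PaperG", "PaperH"),
]

# Assumed background knowledge (step 6 of the table).
BACKGROUND_NLP: Set[str] = {"PaperA"}


def abduce(observed: str) -> Tuple[List[Tuple[str, str]], Optional[str]]:
    """Search backward from `observed`; return the hypotheses and the explaining paper."""
    hypotheses: List[Tuple[str, str]] = []
    seen: Set[str] = {observed}
    queue: Deque[str] = deque([observed])
    while queue:
        target = queue.popleft()
        for relation, source, dest in LINKS:
            if dest != target or source in seen:
                continue
            seen.add(source)
            # Each backward link yields a hypothesis: `source` may be relevant.
            hypotheses.append((source, f"{relation}({source}, {dest})"))
            if source in BACKGROUND_NLP:
                return hypotheses, source  # plausible explanation found
            queue.append(source)
    return hypotheses, None  # no explanation supported by background knowledge


hypotheses, explanation = abduce("PaperF")
for paper, evidence in hypotheses:
    print(f"{paper} might be relevant because {evidence}")
print("Explanation:", explanation)
```

Because the search follows every known relationship, it also surfaces Paper B through cites(PaperB, PaperD), a candidate the table does not list.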

- 15. Inductive Reasoning: Concept
  - Definition: Inductive reasoning generalizes from specific observations to broader principles or rules. It is useful when patterns or trends are observed in the data, leading to predictions.
  - Key characteristics:
    - Used for pattern recognition and trend analysis.
    - Helps in forming hypotheses and theories.
    - Applied in AI, machine learning, and scientific research.
  - Example: If multiple papers show a correlation between Transformer Networks and high NLP performance, we can generalize that Transformers are effective for NLP tasks.

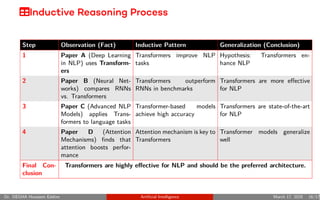

- 16. Inductive Reasoning Process

  | Step | Observation (Fact) | Inductive Pattern | Generalization (Conclusion) |
  |---|---|---|---|
  | 1 | Paper A (Deep Learning in NLP) uses Transformers | Transformers improve NLP tasks | Hypothesis: Transformers enhance NLP |
  | 2 | Paper B (Neural Networks) compares RNNs vs. Transformers | Transformers outperform RNNs in benchmarks | Transformers are more effective for NLP |
  | 3 | Paper C (Advanced NLP Models) applies Transformers to language tasks | Transformer-based models achieve high accuracy | Transformers are state-of-the-art for NLP |
  | 4 | Paper D (Attention Mechanisms) finds that attention boosts performance | Attention mechanism is key to Transformers | Transformer models generalize well |

  Final conclusion: Transformers are highly effective for NLP and should be the preferred architecture.

- 17. Benefits of Inductive Reasoning
  - Why use inductive reasoning?
    - Discovers hidden patterns in large datasets.
    - Predicts future trends based on past data.
    - Forms general theories from real-world observations.
    - Applies to AI and machine learning for model training.
  - Example: The discovery of Transformers' success in NLP led to their widespread adoption in AI applications.
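The generalization step in the table can be illustrated with a toy sketch: a rule is proposed only when enough observations support it and none contradict it. Everything here is an assumption for illustration, including the `Observation` record, the boolean encoding of each paper's finding, and the `min_support` threshold of 3.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Observation:
    paper: str
    architecture: str
    improves_nlp: bool  # simplified reading of each paper's reported finding


# The four observations from the table, reduced to a single boolean each.
OBSERVATIONS: List[Observation] = [
    Observation("PaperA", "Transformer", True),
    Observation("PaperB", "Transformer", True),
    Observation("PaperC", "Transformer", True),
    Observation("PaperD", "Transformer", True),
]


def generalize(observations: List[Observation], architecture: str,
               min_support: int = 3) -> Optional[str]:
    """Propose a general rule if every relevant observation supports it."""
    relevant = [o for o in observations if o.architecture == architecture]
    support = sum(o.improves_nlp for o in relevant)
    if relevant and support == len(relevant) and support >= min_support:
        return (f"{architecture} models are effective for NLP "
                f"({support} supporting observations)")
    return None  # too little evidence, or a counterexample exists


conclusion = generalize(OBSERVATIONS, "Transformer")
# A single hypothetical counterexample blocks the generalization.
weakened = generalize(
    OBSERVATIONS + [Observation("PaperX", "Transformer", False)], "Transformer"
)
print(conclusion)
print(weakened)
```

Unlike the backward-chaining conclusion, this result is only as strong as the sample: one contradicting observation is enough to withdraw it.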