Linked Open Services @ SemData2010

- 1. Linked Data Meets Services and Processes:Linked Open ServicesBarry Norton, RetoKrummenacherSemData@ESWC, May 30, 2010

- 2. AgendaState of the art in combination of Linked Open Data and servicesServices over the LOD Cloud(SWS) Service descriptions in the LOD CloudWhy not just SWS?Linked Open ServicesOutlook2Linked Open ServicesDr. Barry Norton30.05.2010

- 3. State of the Art ¨C GeoNames.orgLinked Open ServicesDr. Barry Norton330.05.2010

- 4. State of the Art ¨C GeoNames.org ServicesLinked Open ServicesDr. Barry Norton430.05.2010

- 5. State of the Art ¨C GeoNames.org ServicesLinked Open ServicesDr. Barry Norton530.05.2010

- 6. State of the Art ¨C GeoNames.org Weather ServiceLinked Open ServicesDr. Barry Norton630.05.2010

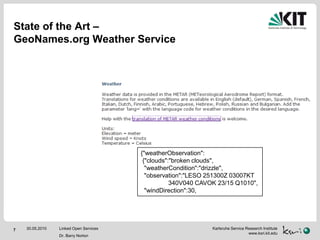

- 7. State of the Art ¨C GeoNames.org Weather Service{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Linked Open ServicesDr. Barry Norton730.05.2010

- 8. State of the Art ¨C GeoNames.org Weather Service{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Linked Open ServicesDr. Barry Norton830.05.2010

- 9. State of the Art ¨C Combination of LOD & ServicesLast SemData Workshop presented ¡®Linked Services¡¯, which are the exposure of service descriptions as LODService model based on ¡®Minimal Service Model¡¯, which is ¡°SAWSDL in RDF¡±:¡®De-XMLised¡¯ (WSDL) RPC model in RDF(S)Ontology/vocabulary classification of inputs/outputsPointer to ¡®lifting and lowering schemas¡¯turn XML-based messages into instances of these classesLinked Open ServicesDr. Barry Norton930.05.2010

- 10. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Why not just SWS?RDFSWeatherObservationXSPARQLReportCloudReportWindReportRDF [ rdf:value "30¡°^^xsd:int; # liftingrdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1030.05.2010

- 11. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Why not just SWS?RDFSWeatherObservationXSPARQLReportCloudReportWindReportRDF [ rdf:value ??? # liftingrdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1130.05.2010

- 12. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODRDF(S)WeatherObservationXSPARQLReportCloudReportWindReportRDF [ rdf:value :brokenClouds # liftingrdf:type :WindReport #classification]:brokenCloudsrdf:value ¡°broken clouds¡±@en;rdf:value ¡°§â§Ñ§Ù§Ò§Ú§ä§Ú §à§Ò§İ§Ñ§è§Ú¡°@bg.Linked Open ServicesDr. Barry Norton1230.05.2010

- 13. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,RDF(S)Services as LODWeatherObservationXSPARQLReportCloudReportWindReportRDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]:brokenCloudsrdf:value ¡°broken clouds¡±@en;rdf:value ¡°§â§Ñ§Ù§Ò§Ú§ä§Ú §à§Ò§İ§Ñ§è§Ú¡°@bg.Linked Open ServicesDr. Barry Norton1330.05.2010

- 14. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODXSPARQLWhere?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1430.05.2010

- 15. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODXSPARQLWhere? Says who?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1530.05.2010

- 16. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODImplicit relationship of input and outputXSPARQLWhere? Says who?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1630.05.2010

- 17. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODImplicit relationship of input and outputImplicit in interaction with particular serviceXSPARQLWhere? Says who?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1730.05.2010

- 18. JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODImplicit relationship of input and outputImplicit in interaction with particular serviceXSPARQLWhere? Says who?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Simply lifting I/O does not capture knowledge contributionof service executionLinked Open ServicesDr. Barry Norton1830.05.2010

- 19. Linked Open Services (Principles/Manifesto)Describe and expose services as LOD prosumersDescribe inputs and output as SPARQLgraph patternsExpose RESTfully with negotiable RDFEncode implicit knowledge in knowledge contributionEncode using SPARQL CONSTRUCTsBuilds LOD-friendly processes:Conditions ¨C SPARQL ASKsIteration ¨C SPARQL SELECTsLinked Open ServicesDr. Barry Norton1930.05.2010

- 20. LOS! ExamplePOST /examples/weatherICAOHost: www.linkedopenservices.orgContent-Type: application/rdf+xml<rdf:RDF ...> <geonames:City about="http://www.geonames.org/.../Vienna">...</rdf:RDF>@prefix geonamesCities:<...>[geonamesCities:vienna :weatherCondition [:cloudReport :brokenClouds; :windReport [rdf:value "20¡°^^xsd:int ; unit:kph]](+ reification for provenance)¡°§â§Ñ§Ù§Ò§Ú§ä§Ú §à§Ò§İ§Ñ§è§Ú¡°@bg.Linked Open ServicesDr. Barry Norton2030.05.2010

- 21. OutlookLinked Open Services Tutorial @ ISWCLinkedOpenServices.org/examplesDescriptions of real servicesLinkedOpenServices.org/nsService and process modelsLinkedOpenServices.org/blogRSS feed of developmentsLinkedOpenServices.org/wikiOpen developmentLinked Open ServicesDr. Barry Norton2130.05.2010

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Why not just SWS?RDFSWeatherObservationXSPARQLReportCloudReportWindReportRDF [ rdf:value "30¡°^^xsd:int; # liftingrdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1030.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-10-320.jpg)

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Why not just SWS?RDFSWeatherObservationXSPARQLReportCloudReportWindReportRDF [ rdf:value ??? # liftingrdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1130.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-11-320.jpg)

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODRDF(S)WeatherObservationXSPARQLReportCloudReportWindReportRDF [ rdf:value :brokenClouds # liftingrdf:type :WindReport #classification]:brokenCloudsrdf:value ¡°broken clouds¡±@en;rdf:value ¡°§â§Ñ§Ù§Ò§Ú§ä§Ú §à§Ò§İ§Ñ§è§Ú¡°@bg.Linked Open ServicesDr. Barry Norton1230.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-12-320.jpg)

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,RDF(S)Services as LODWeatherObservationXSPARQLReportCloudReportWindReportRDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]:brokenCloudsrdf:value ¡°broken clouds¡±@en;rdf:value ¡°§â§Ñ§Ù§Ò§Ú§ä§Ú §à§Ò§İ§Ñ§è§Ú¡°@bg.Linked Open ServicesDr. Barry Norton1330.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-13-320.jpg)

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODXSPARQLWhere?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1430.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-14-320.jpg)

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODXSPARQLWhere? Says who?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1530.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-15-320.jpg)

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODImplicit relationship of input and outputXSPARQLWhere? Says who?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1630.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-16-320.jpg)

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODImplicit relationship of input and outputImplicit in interaction with particular serviceXSPARQLWhere? Says who?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Linked Open ServicesDr. Barry Norton1730.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-17-320.jpg)

![JSON{"weatherObservation": {"clouds":"broken clouds", "weatherCondition":"drizzle", "observation":"LESO 251300Z 03007KT 340V040 CAVOK 23/15 Q1010", "windDirection":30,Services as LODImplicit relationship of input and outputImplicit in interaction with particular serviceXSPARQLWhere? Says who?RDF [ rdf:value "30¡°^^xsd:int; # lifting<http://www.w3.org/2007/ont/unit/UnitName> ... # implicit knowledgerdf:type :WindReport #classification]Simply lifting I/O does not capture knowledge contributionof service executionLinked Open ServicesDr. Barry Norton1830.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-18-320.jpg)

![LOS! ExamplePOST /examples/weatherICAOHost: www.linkedopenservices.orgContent-Type: application/rdf+xml<rdf:RDF ...> <geonames:City about="http://www.geonames.org/.../Vienna">...</rdf:RDF>@prefix geonamesCities:<...>[geonamesCities:vienna :weatherCondition [:cloudReport :brokenClouds; :windReport [rdf:value "20¡°^^xsd:int ; unit:kph]](+ reification for provenance)¡°§â§Ñ§Ù§Ò§Ú§ä§Ú §à§Ò§İ§Ñ§è§Ú¡°@bg.Linked Open ServicesDr. Barry Norton2030.05.2010](https://image.slidesharecdn.com/semdata2010linkedopenservices-100531091010-phpapp01/85/Linked-Open-Services-SemData2010-20-320.jpg)